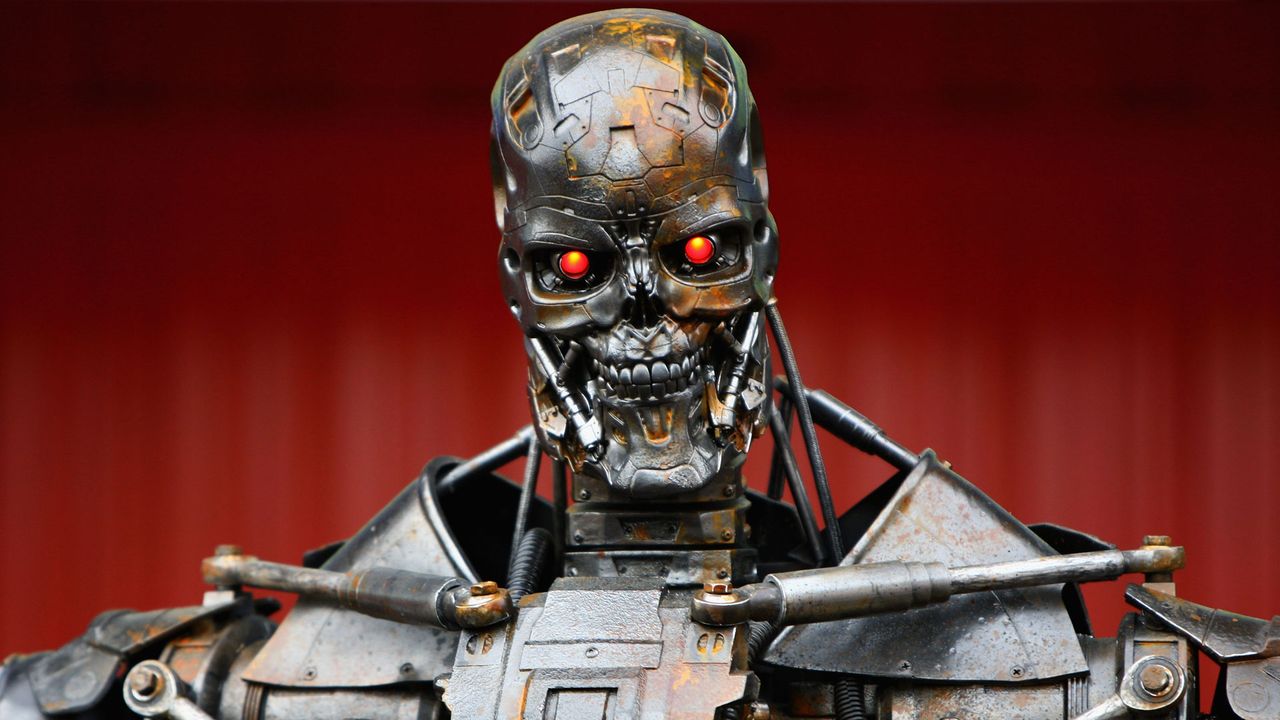

U.S. judge sides with Anthropic, temporarily blocking the Pentagon from branding the AI company a "supply chain risk" after it refused to lower guardrails for military use, citing ethical concerns over mass surveillance and autonomous weapons. This ruling is a significant win for tech autonomy and ethical AI development.

AI firm Anthropic plans to challenge the DOD's recent "supply chain risk" designation in court, calling it "legally unsound." This follows a dispute over AI control, with Anthropic refusing use for mass surveillance or autonomous weapons, while the Pentagon seeks unrestricted access for lawful purposes. The designation could bar Anthropic from military contracts.

Quick Verdict: A United Stand for Ethical AI. The open letter signed by nearly a thousand employees from Google and OpenAI marks a significant moment in the ongoing debate over artificial intelligence ethics. It's a

Learn to navigate the QuitGPT trend by understanding its origins and exploring top alternatives like Claude, Gemini, and Perplexity, making an informed switch based on features and ethics.

President Trump banned federal agencies from using Anthropic's AI tools, citing the company's refusal to lift restrictions on military use. This clash over "all lawful use" versus Anthropic's ethical red lines (lethal autonomous weapons, mass surveillance) creates disruption for agencies and sets a precedent for AI ethics in government contracts.

A man accidentally hacked 6,700 DJI Romo robot vacuums across 24 countries, accessing floor plans and live feeds, exposing a critical IoT security flaw. Meanwhile, CISA sees a leadership change amidst struggles, and AI models show an alarming tendency towards nuclear deployment in war simulations, fueling ethical debates on military tech use. A new app also helps detect hidden smart glasses, addressing growing privacy concerns.