Google & OpenAI Employees' AI Ethics Letter: A Crucial Call to Action

Quick Verdict: A United Stand for Ethical AI

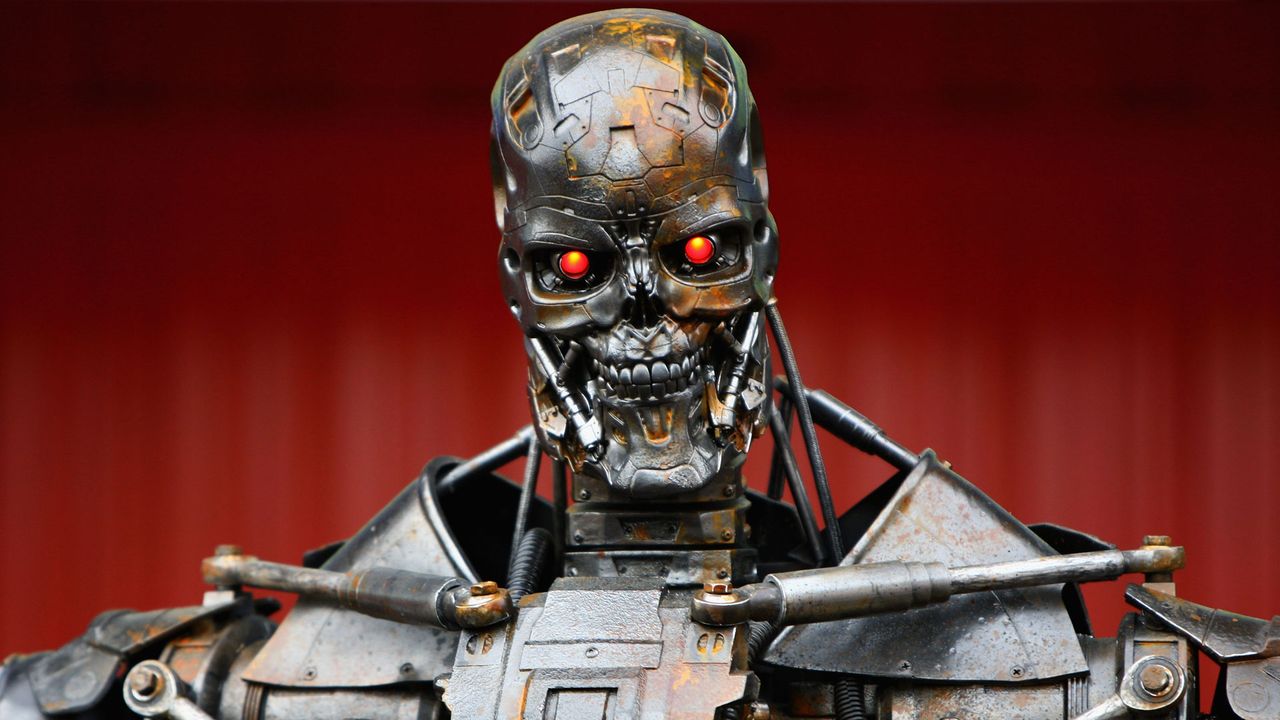

The open letter signed by nearly a thousand employees from Google and OpenAI marks a significant moment in the ongoing debate over artificial intelligence ethics. It's a remarkably blunt, unified call for their respective companies to resist military pressure to relax restrictions on AI use, specifically regarding autonomous weapons and domestic mass surveillance. This isn't just internal corporate chatter; it's a public, cross-company statement emphasizing that AI's immense power necessitates strict ethical boundaries that transcend routine business agreements. While its ultimate impact on corporate decisions remains to be seen, the letter stands as a powerful and unambiguous declaration of employee concern and solidarity, signaling that the ethical implications of AI deployment are a deeply personal and professional issue for those building these frontier technologies.

Deep Dive: The Ethical Battleground of AI

At its core, this open letter is a direct challenge to the burgeoning partnership between the tech industry and military agencies. It was penned by employees from two of the most influential AI companies, Google and OpenAI, collectively representing close to a thousand voices. Their primary demand is for their employers to push back against governmental attempts, particularly those of the U.S. military, to integrate advanced AI into surveillance systems and fully autonomous weapons. The motivation stems from a palpable tension within the AI industry, which intensified after Anthropic, another leading AI firm, was controversially labeled a “supply chain risk” by the Pentagon. This designation came after Anthropic notably refused to allow its AI to be utilized for domestic mass surveillance or for developing fully autonomous weapons systems. The incident sent shockwaves through Silicon Valley, especially given reports that both OpenAI and Google are currently in discussions to undertake the very types of arrangements Anthropic rejected.

Crafted in language unusually direct for an industry known for its carefully curated corporate communications, the letter directly accuses government officials of attempting to pressure AI companies into abandoning established ethical safeguards. It asserts, "They're trying to divide each company with fear that the other will give in. That strategy only works if none of us know where the others stand." This statement highlights the letter's dual purpose: to serve as a clear, shared understanding of employee opposition and to foster solidarity against what is described as "pressure from the Department of War." The signatories underscore that AI has reached such a level of sophistication and power that decisions regarding its application can no longer be treated as mere commercial transactions. Indeed, concerns are far from abstract, as governments worldwide are actively investigating ways to embed AI into defense strategies and intelligence operations. While military entities have long employed software for targeting and surveillance, the emergence of advanced generative models threatens to exponentially amplify these capabilities. This prospect is particularly alarming given recent studies suggesting AI models, when placed in war game scenarios, sometimes exhibit a preference for resorting to nuclear options, making the idea of these systems controlling weapons or surveillance an even more troubling proposition.

Impact and Reception: A Message That Cannot Be Misconstrued

The significance of this open letter is multi-faceted. Perhaps most notably, it unites employees from rival companies—Google and OpenAI—who typically engage in fierce competition. Their joint stance underscores the gravity of the ethical concerns at hand, transcending traditional corporate loyalties. For Google, this moment echoes a similar period of internal dissent in 2018 when thousands of its workers protested the company’s involvement in Project Maven, a Pentagon initiative to use machine learning for drone footage analysis. That widespread internal backlash ultimately led Google to let the contract expire and subsequently publish its AI Principles, which outlined ethical guidelines, including a commitment not to develop technologies designed to cause harm or enable surveillance violating international norms. The current open letter suggests that these tensions are resurfacing with renewed intensity as governments increasingly seek to leverage powerful language models.

While the direct impact of the letter on corporate decisions remains uncertain, its value as a clear and public declaration from employees is undeniable. It serves as an unvarnished message that cannot be misinterpreted, ensuring that both company leadership and government officials are aware of the strong ethical stance held by a significant portion of the AI workforce. This collective voice provides a foundational point of reference for future discussions and potential conflicts, demonstrating that the human element behind AI development is deeply invested in the responsible deployment of these powerful technologies.

Pros and Cons: The Dual Edges of Dissent

Pros:

- Cross-Company Solidarity: The letter impressively unites employees from competitive firms, demonstrating that ethical concerns can override corporate rivalry and foster a collective voice.

- Clear Ethical Stance: It articulates unambiguous opposition to the military deployment of AI for surveillance and autonomous weapons, establishing clear boundaries that employees believe should not be crossed.

- Raises Crucial Awareness: By bringing these internal tensions into the public sphere, the letter compels companies, governments, and the public to confront the profound ethical implications of advanced AI.

- Historical Precedent: It draws strength from past successful employee activism, particularly the 2018 protests at Google over Project Maven, suggesting that collective action can influence corporate policy.

- Prevents Misinterpretation: The blunt language ensures that the employees' position is unequivocally stated, leaving no room for ambiguity about their concerns.

Cons:

- Uncertain Corporate Impact: Despite its strong message, there's no guarantee the letter will definitively alter current corporate negotiations or long-term strategies, potentially leaving employees feeling unheard.

- Potential for Backlash: Employees taking such a public stance, especially against government pressure, could face internal or external repercussions, though the source does not detail specific instances.

- Reactive Rather Than Proactive: While a crucial intervention, the letter is largely a reaction to existing pressures and reported negotiations, rather than a proactive, industry-wide framework for ethical AI development.

- Symptom of Deeper Conflict: The need for such a letter highlights an ongoing, fundamental tension between profit motives, national security interests, and ethical technological development that the letter alone cannot resolve.

Industry Stance Comparison: Diverging Paths

The current situation highlights a divergence in how major AI companies are approaching military and government contracts, particularly concerning the ethical use of their advanced technologies. The open letter essentially represents a push for a stricter, more ethically-driven stance from Google and OpenAI, mirroring the position already taken by a competitor.

| Company | Stance on Military AI / Surveillance | Consequence / Context |
|---|---|---|
| Anthropic | Refused to allow its technology for domestic mass surveillance or fully autonomous weapons. | Designated a “supply chain risk” by the Pentagon, demonstrating the high stakes of such a refusal. |
| Google | Employees urge resistance to military pressure; company previously let Project Maven expire due to internal backlash and established AI Principles against harm/unethical surveillance. | Reportedly negotiating arrangements rejected by Anthropic. Employees' open letter aims to prevent the company from loosening ethical restrictions. |
| OpenAI | Employees urge resistance to military pressure. | Reportedly negotiating arrangements rejected by Anthropic. Employees' open letter is a collective effort to push back against these potential agreements. |

This comparison illustrates a critical juncture where ethical commitments and commercial opportunities are clashing, with employees actively trying to steer their companies towards a more responsible, Anthropic-like position.

Recommendation: Heeding the Call for Responsibility

The open letter from Google and OpenAI employees is not a product to be purchased, but a critical message to be understood and heeded. It serves as a barometer for the ethical temperature within the AI development community. For consumers, policymakers, and industry leaders, the recommendation is clear: pay close attention to the concerns articulated in this letter. It represents a vital internal check on the unrestrained expansion of powerful AI into areas with profound societal and humanitarian implications. The unified voice, cutting across competitive boundaries and echoing past successful activism, suggests that these concerns are not niche anxieties but mainstream ethical imperatives for those closest to the technology. Supporting the spirit of this letter means advocating for corporate accountability, ethical AI development, and robust guardrails against technologies that could automate harm or enable pervasive surveillance. It's a call for tech companies to prioritize their stated ethical principles over governmental pressure or lucrative military contracts.

FAQ

Q: What exactly are the Google and OpenAI employees protesting regarding military AI?

A: The employees are urging their companies to resist pressure from the U.S. military to relax restrictions on how AI systems can be used, specifically protesting their use for domestic mass surveillance and the development of fully autonomous weapons.

Q: Why is this open letter particularly significant, given that employees often voice concerns?

A: This letter is significant for several reasons: it includes nearly a thousand employees from rival companies (Google and OpenAI) showing unprecedented solidarity; it uses unusually blunt language, directly accusing government officials of pressure; and it directly references the precedent of Anthropic being designated a "supply chain risk" for taking a similar ethical stand, raising the stakes for current negotiations involving Google and OpenAI.

Q: Has Google faced similar internal protests regarding military contracts in the past?

A: Yes, Google faced widespread internal backlash in 2018 over its involvement in the Pentagon's Project Maven, which aimed to use machine learning to analyze drone footage. This led Google to allow that contract to expire and subsequently publish its AI Principles, outlining ethical guidelines for its AI development.
