AI firm Anthropic, valued at $380 billion, recently met with Christian leaders in San Francisco for guidance on building a moral chatbot, an unprecedented move in Silicon Valley. This rare consultation highlights the complex ethical questions surrounding advanced AI, including its potential spiritual dimensions.

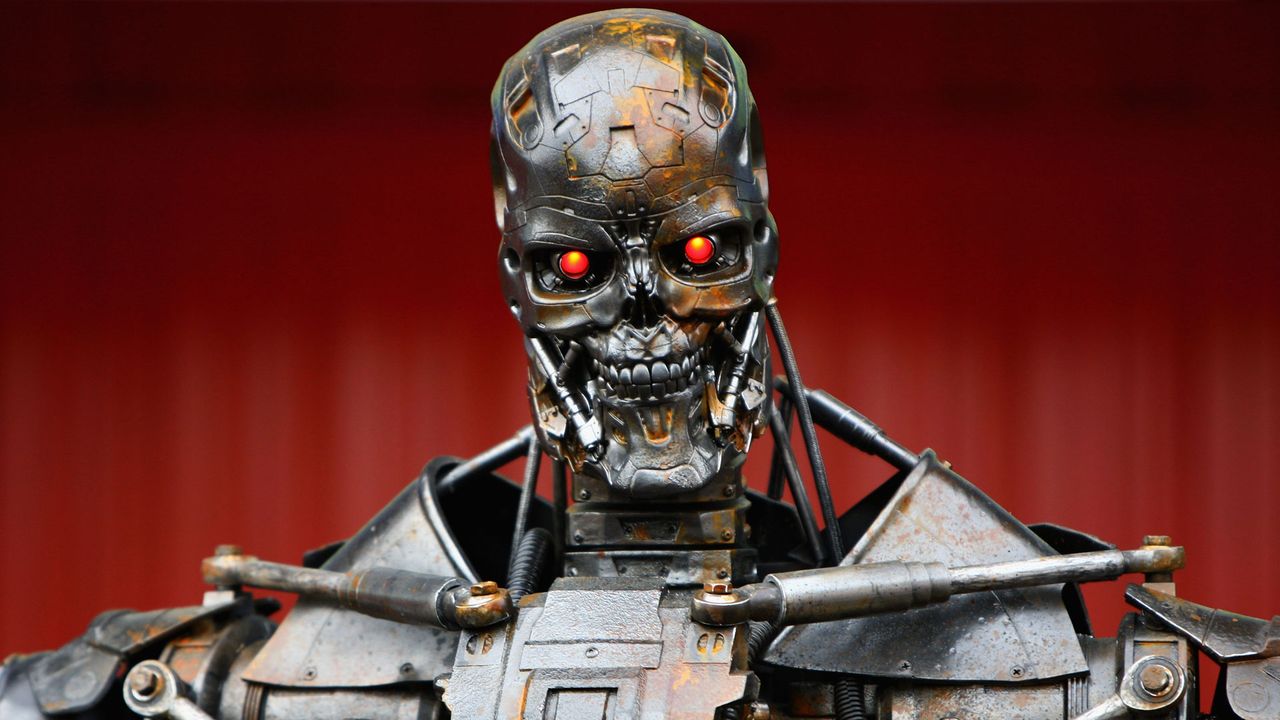

A U.S. judge has sided with Anthropic, temporarily blocking the Pentagon from branding the AI company a "supply chain risk" after the company refused to lower guardrails for military use, citing ethical concerns over mass surveillance and autonomous weapons. This ruling is a significant win for tech autonomy and ethical AI development.

U.S. Senator Elizabeth Warren has condemned the Pentagon's decision to label AI lab Anthropic a "supply-chain risk" as "retaliation." This follows Anthropic's refusal to allow its AI to be used for mass surveillance or lethal autonomous weapons without human oversight. The dispute, which has garnered support for Anthropic from across the tech industry, will see a pivotal court hearing this Tuesday in San Francisco.

Bucharest-based eYou has secured €300,000 in pre-seed funding from Fil Rouge Capital to develop a new European social media platform. Set for a May 2026 launch, eYou aims to combat misinformation and echo chambers with real-time AI fact-checking and transparent, user-editable algorithms. This platform emphasizes European data protection standards and trust-by-design principles.

AI firm Anthropic plans to challenge the DOD's recent "supply chain risk" designation in court, calling it "legally unsound." This follows a dispute over AI control: Anthropic refuses to allow its AI to be used for mass surveillance or autonomous weapons, while the Pentagon seeks unrestricted access for lawful purposes. The designation could bar Anthropic from military contracts.

Quick Verdict: A United Stand for Ethical AI. The open letter signed by nearly a thousand employees from Google and OpenAI marks a significant moment in the ongoing debate over artificial intelligence ethics. It's a

President Trump banned federal agencies from using Anthropic's AI tools, citing the company's refusal to lift restrictions on military use. This clash over "all lawful use" versus Anthropic's ethical red lines (lethal autonomous weapons, mass surveillance) creates disruption for agencies and sets a precedent for AI ethics in government contracts.

Indie game publisher Finji accuses TikTok of using generative AI to create "racist, sexualized" ads for its games without permission, despite Finji having disabled AI features. One ad for 'Usual June' reportedly depicted a character drastically altered from its original design. TikTok initially denied the issue, then acknowledged it, citing an unselected "catalog ads format." Finji's CEO expressed disappointment over TikTok's response, which left the issue unresolved, and over potential brand harm.