Boosting LLM Accuracy: Building a Context Hub Relevance Engine

Context Hub (`chub`) addresses LLM limitations by providing coding agents with curated, versioned documentation and skills via a CLI, augmented by local annotations and maintainer feedback. This article explores `chub`'s workflow and content model, then demonstrates building a companion relevance engine. This engine uses an additive reranking layer with extracted signals to significantly improve search accuracy for shorthand queries without altering `chub`'s core design.

Large Language Models (LLMs) are powerful coding assistants, but they often struggle with a fundamental problem: maintaining accurate, up-to-date, and context-specific knowledge. They might misremember API details, miss version-specific nuances, or simply forget lessons learned from previous interactions within a session. This is precisely the challenge Context Hub (chub) aims to solve.

Context Hub provides coding agents with a structured way to access curated, versioned documentation and executable skills. It operates through a command-line interface (CLI) that agents can use to search for and fetch relevant information. Beyond simple retrieval, chub incorporates two crucial learning loops: local annotations for persistent agent memory and a feedback mechanism for maintainers to improve the central documentation.

In this article, we'll explore the official chub workflow, its content organization, and how annotations and feedback create a continuous improvement cycle. Crucially, we'll then dive into building a companion relevance engine that enhances retrieval capabilities without disrupting chub's core content model. This companion engine demonstrates a practical approach to improving search accuracy by leveraging additional signals and a reranking layer.

Understanding the Core Context Hub Workflow

Think of Context Hub as a predictable contract for your coding agent. Instead of relying solely on its internal training data, an agent can follow a defined sequence:

- Search: Query chub to find relevant entries.

- Fetch: Retrieve the specific documentation or skill.

- Code: Generate code based on the curated content.

- Annotate: Save local lessons or insights for future reference.

- Feedback: Provide quality feedback to maintainers for global documentation improvement.

This workflow establishes a clear system boundary, making the agent's behavior more auditable, easier to improve, and more extensible. When an agent fails, you can precisely pinpoint whether the issue occurred during search, fetch, context selection, or code generation.
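One way to make that failure attribution concrete is to run each step as a named stage and record where a run stopped. The sketch below is illustrative only: `run_workflow` and the stage callables are hypothetical stand-ins and are not part of chub.

```python
from typing import Any, Callable

# The five steps of the workflow, in the order listed above.
STAGES = ["search", "fetch", "code", "annotate", "feedback"]


def run_workflow(steps: dict[str, Callable[[Any], Any]], query: str) -> dict:
    """Run each stage in order, passing the previous result forward.

    Returns a report naming the stage that failed, if any, so a bad
    answer can be traced to a specific step.
    """
    value: Any = query
    completed: list[str] = []
    for name in STAGES:
        try:
            value = steps[name](value)
        except Exception as exc:  # record the failing stage and stop
            return {"ok": False, "failed_stage": name,
                    "completed": completed, "error": str(exc)}
        completed.append(name)
    return {"ok": True, "failed_stage": None,
            "completed": completed, "error": None}


def _fail_fetch(entry_id: str) -> str:
    raise FileNotFoundError(f"no such entry: {entry_id}")


# Hypothetical stand-ins for real tool calls; fetch is made to fail.
demo_steps = {
    "search": lambda q: "stripe/webhooks",
    "fetch": _fail_fetch,
    "code": lambda doc: "generated code",
    "annotate": lambda code: code,
    "feedback": lambda code: code,
}

report = run_workflow(demo_steps, "raw body stripe")
print(report["failed_stage"])  # fetch
```

Because every stage has a name, a log of these reports shows at a glance whether problems cluster in retrieval or in generation.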

The chub CLI is designed primarily for agent use, not just manual developer interaction. For instance, an agent like Claude Code can be taught a retrieval habit: “Before writing code for a third-party SDK, use chub instead of guessing.” This is facilitated by skills like get-api-docs, which agents can integrate into their toolkit:

```bash
mkdir -p .claude/skills
cp "$(npm root -g)/@aisuite/chub/skills/get-api-docs/SKILL.md" \
  .claude/skills/get-api-docs.md
```

Content Structure: Docs, Skills, and Incremental Fetching

Context Hub categorizes content into two distinct types:

- Docs: Answer “what should the agent know?” (e.g., API references, conceptual guides).

- Skills: Answer “how should the agent behave?” (e.g., specific operational procedures, code snippets for common tasks).

This separation allows for independent scaling and management. Docs can be versioned and language-specific, while skills remain concise and task-oriented. Content is organized in a predictable directory structure: author/docs/entry-name/lang/DOC.md or author/skills/entry-name/SKILL.md.
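The layout is regular enough that an entry point can be computed from its parts. The helper below is a minimal sketch of that idea; `entry_path` is a hypothetical name, and the example arguments (including using `py` as the language directory) are assumptions for illustration.

```python
from pathlib import PurePosixPath
from typing import Optional


def entry_path(author: str, kind: str, name: str,
               lang: Optional[str] = None) -> PurePosixPath:
    """Build the entry-point path for a doc or a skill.

    Docs:   author/docs/entry-name/lang/DOC.md
    Skills: author/skills/entry-name/SKILL.md
    """
    if kind == "docs":
        if not lang:
            raise ValueError("docs are language-specific; pass lang")
        return PurePosixPath(author, "docs", name, lang, "DOC.md")
    if kind == "skills":
        return PurePosixPath(author, "skills", name, "SKILL.md")
    raise ValueError(f"unknown kind: {kind!r}")


print(entry_path("stripe", "docs", "webhooks", "py"))
print(entry_path("playwright-community", "skills", "login-flows"))
```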

A significant design choice is chub's support for incremental fetching. You don't have to inject an entire knowledge base into the model at once. Instead, chub get allows you to fetch content in progressively larger slices:

- chub get stripe/webhooks --lang py: Fetches the main overview file.

- chub get stripe/webhooks --lang py --file references/raw-body.md: Fetches a specific reference file.

- chub get stripe/webhooks --lang py --full: Fetches the entire entry directory.
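An agent wrapper might build these three forms programmatically and escalate only when needed. The following is a sketch under assumptions: `chub_get_args` is a hypothetical helper, it uses only the flags shown above, and treating `--file` and `--full` as mutually exclusive is this helper's choice rather than documented CLI behavior.

```python
from typing import Optional


def chub_get_args(entry_id: str, lang: str,
                  file: Optional[str] = None,
                  full: bool = False) -> list[str]:
    """Build the argument list for one of the three `chub get` forms."""
    if file and full:
        raise ValueError("choose either a single file or the full entry")
    args = ["chub", "get", entry_id, "--lang", lang]
    if file:
        args += ["--file", file]
    elif full:
        args.append("--full")
    return args


# Smallest slice first; request more only if the overview is not enough.
overview = chub_get_args("stripe/webhooks", "py")
reference = chub_get_args("stripe/webhooks", "py",
                          file="references/raw-body.md")
everything = chub_get_args("stripe/webhooks", "py", full=True)
print(" ".join(reference))
```

Each list can be passed to `subprocess.run` to execute the command; starting with the overview keeps the first fetch small.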

This is a critical token-budget feature, enabling agents to load an overview, identify the relevant task, and then fetch only the necessary deeper content. chub also supports layered sources via ~/.chub/config.yaml, allowing you to merge public community content with private, team-specific runbooks, providing a unified search surface for agents:

```yaml
sources:
  - name: community
    url: https://cdn.aichub.org/v1
  - name: my-team
    path: /opt/team-docs/dist
```
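Conceptually, layering means entries from every configured source end up in one searchable list. The sketch below illustrates that idea only. How chub itself resolves duplicate IDs across sources is not covered here, so this version simply keeps every entry and tags it with its source. The entry contents, `merge_sources`, and `search` are all hypothetical.

```python
def merge_sources(sources: dict[str, list[dict]]) -> list[dict]:
    """Flatten per-source entry lists into one list, tagging each entry
    with the source it came from so results stay traceable."""
    merged = []
    for source_name, entries in sources.items():
        for entry in entries:
            merged.append({**entry, "source": source_name})
    return merged


def search(index: list[dict], term: str) -> list[dict]:
    """Naive substring match over id and description (illustration only)."""
    term = term.lower()
    return [e for e in index
            if term in e["id"].lower() or term in e["description"].lower()]


# Made-up entries standing in for the two configured sources.
index = merge_sources({
    "community": [
        {"id": "stripe/webhooks",
         "description": "Verify Stripe webhook signatures"},
    ],
    "my-team": [
        {"id": "acme/deploy-runbook",
         "description": "Internal deploy steps, including webhook secrets"},
    ],
})

for hit in search(index, "webhook"):
    print(hit["source"], hit["id"])
```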

The Memory Loop: Annotations and Feedback

Context Hub offers two distinct channels for improvement:

- Annotations (Local Memory): These are private notes or insights an agent saves locally to remember successful past interactions or specific environmental quirks. They are specific to the agent's context and help it learn from experience.

- Feedback (Shared Improvement): This mechanism allows agents to signal content quality issues back to maintainers, prompting improvements to the shared documentation for everyone.

This distinction is vital because not all lessons belong in the public registry. Local annotations (chub annotate) capture environment-specific learnings, while feedback (chub feedback) addresses broader content quality issues.

```bash
chub annotate stripe/webhooks \
  "Remember: Flask request.data must stay raw for Stripe signature verification."

chub feedback stripe/webhooks up
```

This simple loop transforms one-off debugging into either persistent local memory for the agent or a clear signal for central documentation enhancement.
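To illustrate the local half of that loop, here is a minimal sketch of a note store that persists annotations per entry and appends them to fetched content. The JSON layout, the `AnnotationStore` class, and `with_annotations` are assumptions made for this example, not chub's actual storage format.

```python
import json
import tempfile
from pathlib import Path


class AnnotationStore:
    """Tiny JSON-backed note store keyed by entry ID (hypothetical format)."""

    def __init__(self, path: Path):
        self.path = path

    def _load(self) -> dict:
        if self.path.exists():
            return json.loads(self.path.read_text(encoding="utf-8"))
        return {}

    def annotate(self, entry_id: str, note: str) -> None:
        notes = self._load()
        notes.setdefault(entry_id, []).append(note)
        self.path.write_text(json.dumps(notes, indent=2), encoding="utf-8")

    def notes_for(self, entry_id: str) -> list[str]:
        return self._load().get(entry_id, [])


def with_annotations(doc_text: str, notes: list[str]) -> str:
    """Append saved notes to fetched content so the agent sees them again."""
    if not notes:
        return doc_text
    bullets = "\n".join(f"- {n}" for n in notes)
    return f"{doc_text}\n\nLocal annotations:\n{bullets}"


store = AnnotationStore(Path(tempfile.mkdtemp()) / "annotations.json")
store.annotate(
    "stripe/webhooks",
    "Flask request.data must stay raw for Stripe signature verification.",
)
print(with_annotations("(fetched doc text)",
                       store.notes_for("stripe/webhooks")))
```

Keeping the notes in a plain file means they stay private to the machine and remain easy to inspect or delete.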

Addressing Relevance Gaps with a Companion Engine

While chub's baseline search uses strong techniques like BM25 and lexical rescue for package names and exact tokens, real-world developer queries are often less formal. Developers use shorthand like rrf, signin, pg vector, or raw body stripe. The challenge is that many answers to these queries might reside in deeper reference files (e.g., references/rrf.md) rather than the top-level DOC.md entry.

This highlights an opportunity: how can we improve retrieval accuracy without overhauling chub's effective content contract? The solution lies in an additive reranking layer, implemented in a companion repository like context-hub-relevance-engine.

This companion engine maintains chub's core principles—plain Markdown content, predictable entry points, inspectable build artifacts, and the memory loops—but introduces a new build artifact: signals.json. During the build process, this engine extracts additional signals such as:

- Headings from main files

- Titles and key tokens from reference files

- Language and version metadata

- Source freshness

- Overlap with agent annotations

- Prioritized feedback signals
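As a rough illustration of the first few items, the sketch below pulls headings out of Markdown and assembles a per-entry record. The record shape, the function names, and the sample document text and version string are all hypothetical; the real signals.json format may differ.

```python
import json
import re

# ATX headings: 1-6 '#' characters, whitespace, then the heading text.
# Note: this simple pattern would also match '#' comment lines inside
# fenced code blocks, which a real extractor should skip.
HEADING = re.compile(r"^#{1,6}[ \t]+(.*\S)[ \t]*$", re.MULTILINE)


def extract_headings(markdown: str) -> list[str]:
    """Return the text of every ATX heading in the document."""
    return [m.group(1) for m in HEADING.finditer(markdown)]


def build_signals(entry_id: str, main_doc: str,
                  references: dict[str, str],
                  lang: str, version: str) -> dict:
    """Collect a few signals for one entry.

    `references` maps a reference file path to its Markdown text.
    """
    ref_titles = {}
    for path, text in references.items():
        headings = extract_headings(text)
        # Fall back to the file stem when a reference has no heading.
        stem = path.rsplit("/", 1)[-1].removesuffix(".md")
        ref_titles[path] = headings[0] if headings else stem
    return {
        "id": entry_id,
        "lang": lang,
        "version": version,
        "headings": extract_headings(main_doc),
        "reference_titles": ref_titles,
    }


# Made-up content for illustration.
main_doc = "# Retrievers\n\n## Hybrid search\n\n## Reranking\n"
references = {
    "references/rrf.md": "# Reciprocal Rank Fusion (RRF)\n\nCombine ranked lists.\n",
}
signals = build_signals("langchain/retrievers", main_doc, references,
                        lang="python", version="0.3")
print(json.dumps(signals, indent=2))
```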

These signals are then used by a reranking layer that operates after chub's baseline search has identified initial candidates. This approach is beneficial because it's additive (no content model redesign needed) and measurable. You can define specific failure modes, fix them, and re-run benchmarks to track improvements.
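A minimal sketch of such an additive reranker follows. It assumes the baseline returns `(entry_id, score)` pairs and that signals are available as plain dictionaries; the weights, field names, and the `openai/embeddings` entry are invented for illustration and are not taken from the companion repository.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


# Hypothetical weights; a real engine would tune these against a benchmark.
WEIGHTS = {"headings": 2.0, "reference_titles": 3.0, "annotations": 1.5}


def rerank(query: str, candidates: list[tuple[str, float]],
           signals: dict[str, dict]) -> list[dict]:
    """Add signal bonuses on top of baseline scores and re-sort.

    Purely additive: an entry with no signals keeps its baseline score.
    Each result carries an `explain` breakdown for debugging.
    """
    q = tokenize(query)
    ranked = []
    for entry_id, base in candidates:
        sig = signals.get(entry_id, {})
        parts = {"baseline": base}
        for field, weight in WEIGHTS.items():
            overlap = len(q & tokenize(" ".join(sig.get(field, []))))
            parts[field] = weight * overlap
        ranked.append({"id": entry_id,
                       "score": sum(parts.values()),
                       "explain": parts})
    ranked.sort(key=lambda r: r["score"], reverse=True)
    return ranked


# Made-up baseline scores and signals.
candidates = [("openai/embeddings", 4.0), ("langchain/retrievers", 3.5)]
signals = {
    "langchain/retrievers": {
        "headings": ["Hybrid search", "Reranking"],
        "reference_titles": ["Reciprocal Rank Fusion (RRF)"],
        "annotations": [],
    },
}

for row in rerank("rrf hybrid", candidates, signals):
    print(row["id"], row["score"], row["explain"])
```

A reranker of this kind can only reorder what the baseline returned. Recovering an entry the baseline missed entirely also requires widening the candidate set, for example through query expansion.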

Hands-on with the Companion Relevance Engine

To see this in action, clone the context-hub-relevance-engine repository, then install dependencies, build, and run the tests from its root:

```bash
cd context-hub-relevance-engine
npm install
npm run build
npm test
```

Now, let's reproduce a baseline miss and see the improvement:

Baseline Miss for rrf:

```bash
node bin/chub-lab.mjs search rrf --mode baseline --lang python
```

Expected output: No results.

Improved Search for rrf:

```bash
node bin/chub-lab.mjs search rrf --mode improved --lang python
```

Expected top result: langchain/retrievers [doc] score=320.24 Composable retrieval patterns for hybrid search, parent documents, query expansion, and reranking.

This demonstrates how the improved mode leverages reference file titles and expanded terms to find relevant content that the baseline missed.

Workflow-intent Win for signin:

```bash
node bin/chub-lab.mjs search signin --mode baseline

node bin/chub-lab.mjs search signin --mode improved
```

The improved mode correctly returns playwright-community/login-flows by understanding that signin, login, and authentication are related intents.
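One simple way to relate such intents is to expand the query with a hand-maintained group of equivalent terms before matching. The sketch below assumes that approach; the groups and the `expand_query` helper are hypothetical, and the companion engine may derive related terms differently.

```python
# Hypothetical intent groups; the engine's real vocabulary is not shown here.
INTENT_GROUPS = [
    {"signin", "login", "authentication"},
    {"rrf", "reciprocal rank fusion"},
]


def expand_query(query: str) -> list[str]:
    """Return the query terms plus related terms, original terms first."""
    terms = query.lower().split()
    expanded = list(terms)
    for term in terms:
        for group in INTENT_GROUPS:
            if term in group:
                # Sort for a stable order across runs.
                for related in sorted(group - {term}):
                    if related not in expanded:
                        expanded.append(related)
    return expanded


print(expand_query("signin"))  # ['signin', 'authentication', 'login']
print(expand_query("rrf"))     # ['rrf', 'reciprocal rank fusion']
```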

Testing the Memory Loop:

```bash
node bin/chub-lab.mjs annotate stripe/webhooks \
  "Remember: Flask request.data must stay raw for Stripe signature verification."

node bin/chub-lab.mjs get stripe/webhooks --lang python
```

This fetches the main doc and its references, then appends your local annotation, showcasing the agent's persistent memory.

Running the Benchmark:

```bash
npm run reset-store
node bin/chub-lab.mjs evaluate
```

This reports a summary of retrieval accuracy for both modes. Seeding the store (npm run seed-demo) and re-evaluating demonstrates how annotations and feedback can further boost relevant entries.
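For readers who want to see what such a summary can involve, here is a sketch that scores two search functions against a small fixture using top-1 accuracy and mean reciprocal rank. The fixture shape and the `evaluate` function are assumptions. The canned rankings are made up purely to exercise the metric code and are not measurements from the engine.

```python
from typing import Callable


def evaluate(search_fn: Callable[[str], list[str]],
             fixtures: list[dict], k: int = 5) -> dict:
    """Score a search function against query -> expected-entry fixtures."""
    if not fixtures:
        raise ValueError("need at least one fixture")
    top1_hits = 0
    reciprocal_rank_sum = 0.0
    for case in fixtures:
        results = search_fn(case["query"])[:k]
        if results and results[0] == case["expected"]:
            top1_hits += 1
        if case["expected"] in results:
            rank = results.index(case["expected"]) + 1
            reciprocal_rank_sum += 1.0 / rank
    n = len(fixtures)
    return {"top1": top1_hits / n, "mrr": reciprocal_rank_sum / n}


fixtures = [
    {"query": "rrf", "expected": "langchain/retrievers"},
    {"query": "signin", "expected": "playwright-community/login-flows"},
]

# Canned, made-up rankings standing in for the two modes.
BASELINE = {
    "rrf": [],
    "signin": ["other/entry", "playwright-community/login-flows"],
}
IMPROVED = {
    "rrf": ["langchain/retrievers"],
    "signin": ["playwright-community/login-flows"],
}

baseline_report = evaluate(lambda q: BASELINE[q], fixtures)
improved_report = evaluate(lambda q: IMPROVED[q], fixtures)
print("baseline", baseline_report)
print("improved", improved_report)
```

Tracking both metrics helps separate "the right entry is now first" from "the right entry merely appears somewhere in the list".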

Finally, you can launch a local comparison UI:

```bash
npm run serve
```

Then navigate to http://localhost:8787 to visually compare baseline and improved results, inspect annotations, and manage your local chub artifacts.

Connecting to the Upstream Project

The companion repository is designed for rapid iteration and comprehensive exploration of relevance improvements. It's where you can keep the full story together, including UIs, benchmarks, and detailed debug surfaces. However, an upstream pull request (PR) should be more surgical and reviewable. This typically means proposing changes in smaller, focused slices, such as:

- Extracting reference-file specific signals.

- Implementing explainable score output for debugging.

- Defining a lightweight benchmark fixture format.

- Adding an additive reranking hook, initially behind a feature flag.

This approach ensures that the main chub repository remains maintainable while still allowing significant innovations from companion projects to be integrated over time.
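As an illustration of the last item, a reranking hook behind a flag could look like the sketch below. The `CHUB_RERANK` variable and the `search_with_hook` function are hypothetical names chosen for this example; upstream may prefer a config option or a CLI flag instead.

```python
import os
from typing import Callable, Mapping, Optional

Candidate = tuple[str, float]

FLAG = "CHUB_RERANK"  # hypothetical flag name


def search_with_hook(
    query: str,
    baseline_search: Callable[[str], list[Candidate]],
    reranker: Optional[Callable[[str, list[Candidate]], list[Candidate]]] = None,
    env: Optional[Mapping[str, str]] = None,
) -> list[Candidate]:
    """Run the baseline search, then apply `reranker` only if the flag is on.

    With the flag off (the default), results are exactly the baseline's.
    """
    env = os.environ if env is None else env
    candidates = baseline_search(query)
    if reranker is not None and env.get(FLAG) == "1":
        return reranker(query, candidates)
    return candidates


def baseline(query: str) -> list[Candidate]:
    # Made-up results for illustration.
    return [("a/one", 2.0), ("b/two", 1.0)]


def reverse_order(query: str, candidates: list[Candidate]) -> list[Candidate]:
    # Trivial stand-in for a real reranker.
    return list(reversed(candidates))


off = search_with_hook("q", baseline, reverse_order, env={})
on = search_with_hook("q", baseline, reverse_order, env={FLAG: "1"})
print(off)
print(on)
```

Defaulting to the unmodified baseline keeps the change easy to review and easy to revert.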

Conclusion

Context Hub offers more than just documentation storage; it provides a robust system boundary for improving coding agents. It enables transparency into what agents read, precise control over retrieval, seamless layering of public and private knowledge, and powerful mechanisms for persistent local memory and shared documentation improvement. The companion relevance engine demonstrates how to augment chub's existing strengths by introducing a measurable, additive reranking layer that addresses common retrieval challenges, making coding agents even more effective and accurate.

FAQ

Q: What is the primary problem Context Hub aims to solve for LLMs?

A: Context Hub addresses the LLM's tendency to misremember API details, overlook version-specific information, and forget lessons learned within a session by providing curated, versioned, and searchable documentation and skills directly to the agent.

Q: How do annotations differ from feedback in Context Hub?

A: Annotations are local and specific to an agent's memory, helping it remember context-specific lessons or successful past interactions. Feedback, conversely, is shared with maintainers to signal broader content quality issues or missing information in the central documentation, leading to improvements for all users.

Q: Why is the companion relevance engine implemented as an additive reranking layer rather than a complete replacement for chub's search?

A: Implementing the relevance engine as an additive reranking layer allows it to enhance chub's existing strong baseline (BM25, lexical rescue) without disrupting its proven content model or workflows. This approach is also measurable, enabling developers to iteratively improve specific retrieval failure modes while maintaining system stability and transparency.

Related articles

8 ChatGPT Tricks: Unlock Your AI's Full Potential

Quick Verdict For anyone looking to move beyond basic queries with ChatGPT, the "8 ChatGPT tricks" guide by Android Authority serves as an invaluable roadmap. It highlights a collection of practical habits that

Applied Aerospace & Defense Raises $650M in Highly Sought-After IPO

Applied Aerospace & Defense, a Huntsville-based firm, successfully raised $650 million in an IPO that was ten times oversubscribed, pricing shares at $20. The offering underscores a strong investor shift towards defense hardware and solidifies the company's $3.4 billion market valuation. Trading begins Wednesday on the NYSE under AADX.

Trump Signs Executive Order for Voluntary AI Model Oversight

President Trump signed an executive order Tuesday, establishing voluntary government oversight for new AI models. This reverses his prior hands-off approach, balancing innovation with national security by asking companies for a 30-day review.

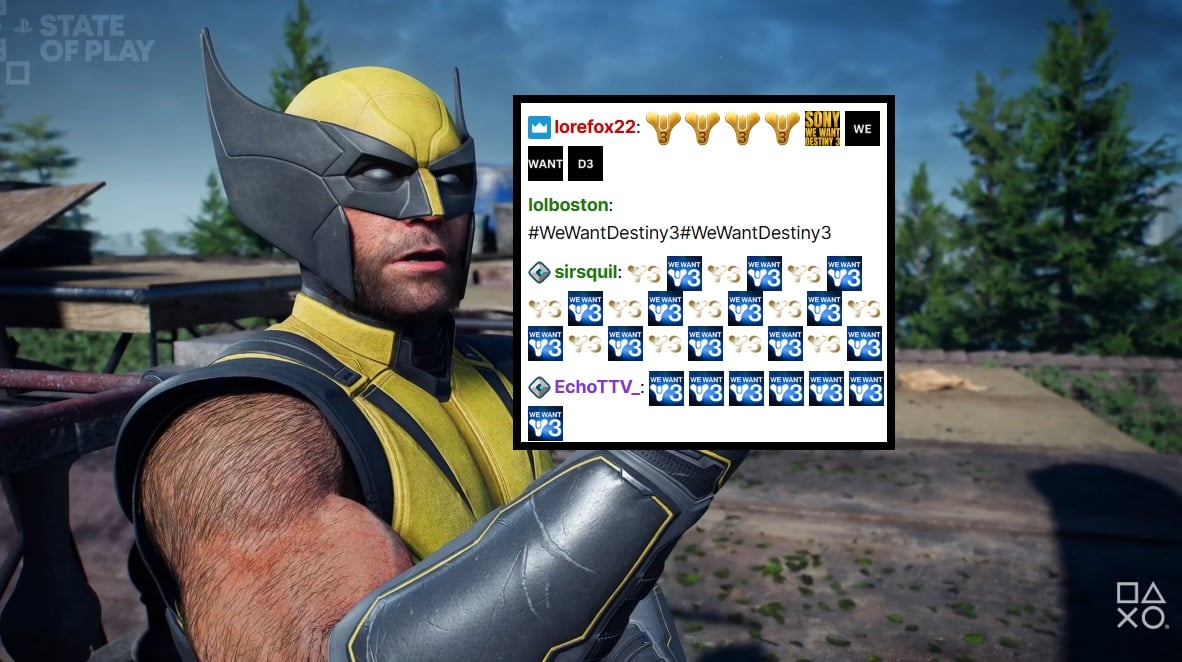

PlayStation Showcase Chat Swamped by Demands for Destiny 3

PlayStation's recent State of Play showcase was largely overshadowed by an impassioned fan campaign in the Twitch chat, demanding 'Destiny 3'. Amidst reveals for new PS5 games, the chat was relentlessly spammed with #WeWantDestiny3, fueled by the unexpected sunsetting of Destiny 2 and the reported absence of a direct sequel. This digital protest reflects widespread community frustration, amplified by a popular streamer and a petition with over 330,000 signatures.

Microsoft Unveils ASSERT, Simplifying AI Behavior Testing with Text

Microsoft has launched ASSERT, an open-source framework designed to simplify AI behavior testing. It enables developers to create comprehensive, application-specific evaluations using natural language descriptions, ensuring AI systems act as intended for particular products and services. The tool translates high-level goals into structured tests, generates scenarios, scores results, and logs execution paths.

Trump Orders Voluntary AI Model Review Before Release

President Trump has signed an executive order creating a voluntary framework for AI companies to share advanced models with the federal government before release. This initiative aims to bolster secure innovation and protect critical infrastructure, reflecting a shift from the administration's previous hands-off approach to AI safety. Companies opting for pre-release review may receive confidentiality protections.