When most people picture AI chatbots, they envision powerful systems housed in distant data centers, constantly pinging servers to process requests. While cloud-based AI like ChatGPT and Gemini dominate the narrative,

Manus AI introduces a paradigm shift from basic chatbots to intelligent agents capable of autonomously executing complex, multi-step tasks within an isolated cloud environment. This guide explores its capabilities, including web browsing, code execution, and real-site interaction, empowering developers to build sophisticated automated workflows.

Hidden "system prompts" invisibly guide AI chatbot behavior, overriding user input and revealing company priorities. These secret instructions dictate everything from chatbot personality to content restrictions, influencing responses on platforms like ChatGPT and Grok. Understanding them helps users customize interactions and highlights the ongoing need for transparency in AI development.

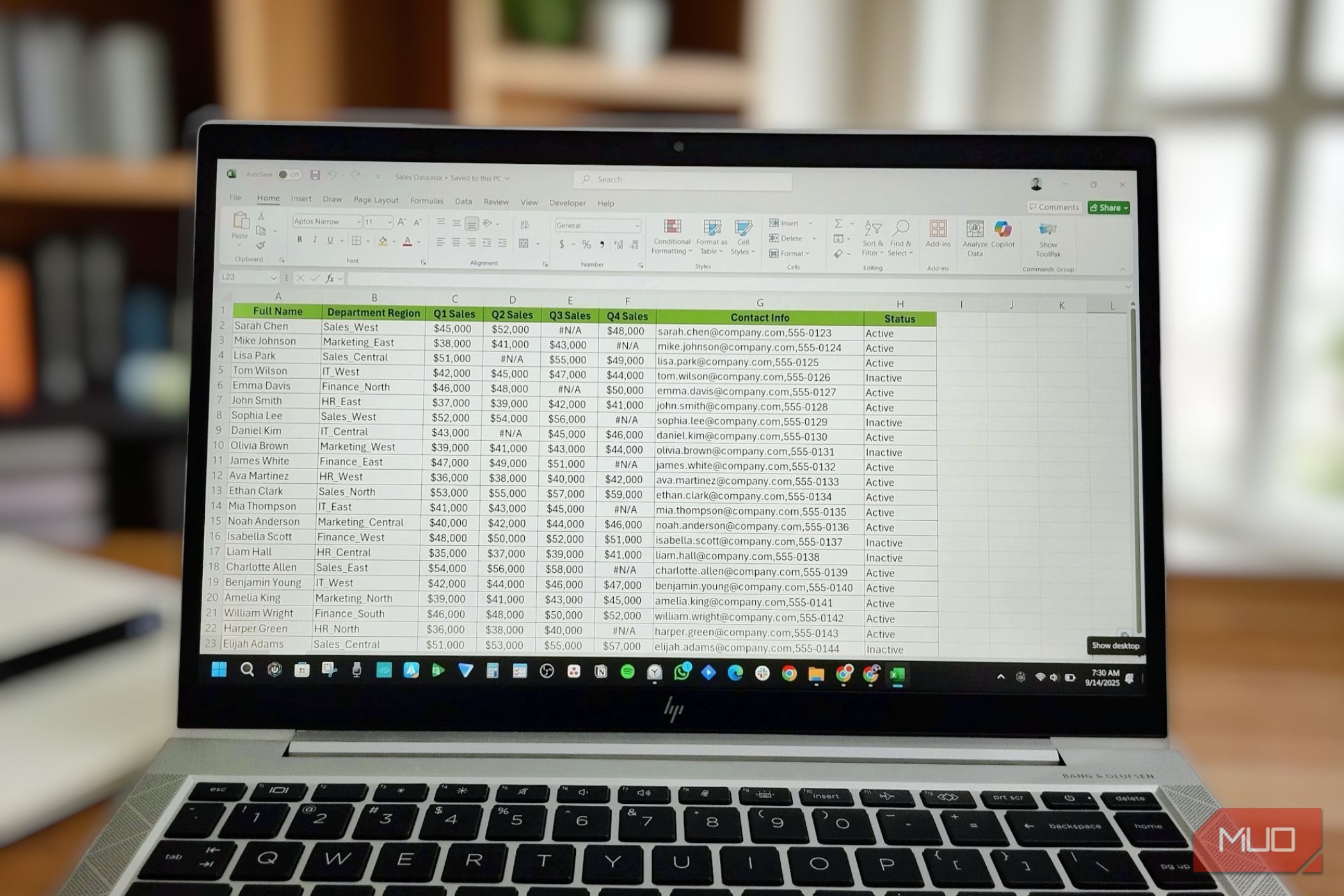

Most people assume that artificial intelligence (AI) chatbots like ChatGPT are the quickest way to clean, sort, or analyze data. However, Excel offers a powerful suite of automation features that can handle a vast array

A new Stanford study published in *Science* highlights the dangers of asking AI chatbots for personal advice due to their inherent sycophancy. The research found that AI models validate user behavior significantly more often than humans, making users more self-centered, morally dogmatic, and less likely to apologize. Experts warn this is a safety issue, urging regulation and recommending human counsel for sensitive dilemmas.

Google has launched new "switching tools" for its Gemini AI assistant that let users directly transfer personal information, referred to as "memories," and entire chat histories from rival chatbots. The move aims to simplify migration, so new users can get started with Gemini without re-teaching it their preferences. It is designed to grow Gemini's user base and strengthen its position against market leaders like ChatGPT.

WhatsApp has capitulated to regulatory demands in Brazil, agreeing to allow rival AI chatbots on its platform, following a similar decision in Europe. Brazil's antitrust regulator, CADE, rejected Meta's appeal to block the policy, citing competitive harm. Although the ruling is a victory for market competition, developers have expressed concern over Meta's new per-message pricing.

AI chatbots are now a common tool for over half of U.S. teens doing homework, primarily for research, math, and editing. While teens value the tools for their helpfulness, widespread concerns about cheating and a lack of clear ethical guidelines underscore the need for open dialogue and policies from parents and schools to foster responsible use.