Securing AI-Assisted Coding: Why Containers and Sandboxes are

AI-assisted coding is advancing beyond simple suggestions to complex agentic systems. To manage inherent risks, robust security and isolation are crucial. Hardened containers, which are minimal and secure, coupled with agent sandboxes, provide the necessary environment for AI agents. This approach treats AI agents with the same rigor as microservices, ensuring predictability and trust in AI-driven workflows.

The integration of AI into our development workflows is rapidly evolving, moving beyond simple code suggestions to more complex, agentic systems that can actively assist in coding tasks. While the promise of AI-driven efficiency is exciting, relying solely on a "good vibe" from an AI without robust controls can introduce significant risks. As fellow developers, we understand the critical need for security, predictability, and isolation in our tools. This is where the principles of hardened containers and agent sandboxes become not just beneficial, but essential, especially when AI agents begin to resemble our familiar microservices.

The Shift to Agentic Workflows and the Need for Trust

Modern AI agents are becoming increasingly sophisticated, capable of not just generating code snippets but also potentially interacting with our development environments, executing tasks, and even proposing structural changes. This elevates their role from passive assistants to active participants, much like small, specialized services running within our systems. As Mark Cavage, President and COO of Docker, points out, these agents are "starting to look a lot like microservices." This comparison is crucial because it implies a similar set of requirements for reliability, security, and management that we apply to our conventional microservices.

Without proper isolation, an AI agent could, intentionally or unintentionally, introduce vulnerabilities, consume excessive resources, or even compromise sensitive data. The ephemeral and often black-box nature of AI outputs means we need a safety net. We can't simply trust the "vibes" of an AI; we need tangible mechanisms to ensure its operations are contained and predictable.

Hardened Containers: The Foundation of Secure AI

At the core of this strategy are hardened containers. The concept is straightforward yet powerful: these are "minimal and secure" containers designed to reduce the attack surface and enhance the integrity of the application running within them. For AI agents, this means:

- Minimality: A hardened container includes only the absolutely necessary components for the AI agent to function. This drastically cuts down on unnecessary dependencies, libraries, and tools that could harbor security vulnerabilities. Less code means fewer potential exploits.

- Security: These containers are built with security best practices in mind, often incorporating configuration and runtime hardening to prevent common attack vectors. When an AI agent operates within such an environment, the risk of it being compromised or used as an entry point into your broader system is significantly diminished.

Docker Hardened Images are highlighted as a direct solution, offering pre-configured, secure base images for various applications, including those powering AI agents. By starting with a hardened base, developers can build their AI agents on a trusted, secure foundation, rather than having to implement complex security configurations from scratch.

Agent Sandboxes: Isolating AI for Predictability and Safety

Beyond the container itself, the concept of an "agent sandbox" provides another critical layer of isolation. A sandbox is essentially a confined environment where an AI agent can perform its tasks without having unrestricted access to the host system or other network resources. This is particularly vital for AI agents that might execute code, interact with APIs, or process sensitive data.
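One small piece of that confinement can also be enforced in the tooling that serves the agent's file requests. The following is a minimal sketch, not a complete sandbox: the `resolve_inside` helper and the idea of a single sandbox root directory are assumptions made for illustration. It is an application-level guard that complements container isolation and does not replace it.

```python
from pathlib import Path


def resolve_inside(sandbox_root: Path, requested: str) -> Path:
    """Return the absolute path for `requested`, or raise if it escapes the sandbox.

    Hypothetical helper: `sandbox_root` is the only directory the agent may touch.
    """
    root = sandbox_root.resolve()
    # Joining and then resolving collapses ".." segments and follows symlinks.
    # An absolute `requested` path replaces the root entirely, so it is caught below.
    candidate = (root / requested).resolve()
    if candidate != root and root not in candidate.parents:
        raise PermissionError(f"path escapes sandbox: {requested}")
    return candidate
```

A file tool exposed to the agent would call `resolve_inside` before every read or write, so a request such as `../secrets.env` fails before any file is opened.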

Consider an AI agent tasked with refactoring code or fixing bugs. In a sandbox, it could apply changes to a temporary, isolated copy of the codebase, allowing developers to review and approve its suggestions before they affect the main project. If the AI makes an error or produces an unexpected output, the impact is contained within the sandbox, preventing cascading failures or data corruption.
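The review-before-merge flow described above can be sketched roughly as follows. This is an illustration under stated assumptions: `propose_changes` and the `agent_edit` callback are hypothetical names, the project is assumed to contain only text files, and a real setup would run the agent inside a container instead of in-process.

```python
import difflib
import shutil
import tempfile
from pathlib import Path
from typing import Callable


def _read_lines(path: Path) -> list[str]:
    # Missing files count as empty so additions and deletions appear in the diff.
    return path.read_text().splitlines(keepends=True) if path.is_file() else []


def propose_changes(project_dir: Path, agent_edit: Callable[[Path], None]) -> str:
    """Run `agent_edit` on a throwaway copy of the project and return a unified diff.

    The original `project_dir` is never modified; the copy is deleted afterwards.
    """
    with tempfile.TemporaryDirectory() as tmp:
        scratch = Path(tmp) / "workspace"
        shutil.copytree(project_dir, scratch)
        agent_edit(scratch)  # the agent only ever sees the copy

        # Compare every file that exists on either side.
        relative_paths = sorted(
            {p.relative_to(project_dir) for p in project_dir.rglob("*") if p.is_file()}
            | {p.relative_to(scratch) for p in scratch.rglob("*") if p.is_file()}
        )
        diff_lines: list[str] = []
        for rel in relative_paths:
            diff_lines.extend(
                difflib.unified_diff(
                    _read_lines(project_dir / rel),
                    _read_lines(scratch / rel),
                    fromfile=f"a/{rel}",
                    tofile=f"b/{rel}",
                )
            )
        return "".join(diff_lines)
```

The returned diff is what a developer reviews and approves. Nothing reaches the main project unless someone applies it deliberately.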

Docker for AI is presented as a solution specifically designed to "build, run, and secure AI agents," implying that it facilitates the creation and management of these sandboxed environments. By leveraging established containerization technologies, developers can ensure that their AI agents operate within clearly defined boundaries, mitigating risks associated with unpredictable AI behaviors.

Practical Takeaways for Developers

For developers integrating AI into their workflows, the message is clear: treat your AI agents with the same architectural rigor you'd apply to any critical microservice.

- Prioritize Isolation: Always run AI agents, especially those with execution capabilities, within isolated environments. Containers are your primary tool here.

- Embrace Hardening: Utilize hardened container images to minimize attack surfaces and build security by default. Don't add unnecessary components to your AI agent's runtime environment.

- Define Clear Boundaries: Use sandboxing techniques to control what your AI agents can access and modify. Restrict network access, file system permissions, and resource consumption to only what is essential for their function.

- Reproducibility and Auditability: Containerization also brings reproducibility. A containerized AI agent can be deployed and run consistently wherever a compatible container runtime is available, and its behavior can be more easily audited and debugged.
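As a rough illustration of the isolation and boundary points above, the sketch below assembles a `docker run` command line with restrictive defaults. The flags are standard Docker CLI options. The image name, the specific limits, the UID, and the `hardened_run_args` helper are assumptions chosen for the example, and the right values depend on what the agent actually needs.

```python
import shlex


def hardened_run_args(image: str, workspace: str, command: list[str]) -> list[str]:
    """Build a `docker run` argument list with restrictive defaults (illustrative values)."""
    return [
        "docker", "run", "--rm",
        "--network", "none",                    # no network access
        "--read-only",                          # root filesystem is read-only
        "--cap-drop", "ALL",                    # drop all Linux capabilities
        "--security-opt", "no-new-privileges",  # block privilege escalation
        "--user", "1000:1000",                  # run as a non-root user (assumed UID:GID)
        "--memory", "512m",                     # cap memory
        "--cpus", "1",                          # cap CPU
        "--pids-limit", "128",                  # cap process count
        "--tmpfs", "/tmp",                      # writable scratch space in memory
        "--mount", f"type=bind,source={workspace},target=/workspace",
        "--workdir", "/workspace",
        image, *command,
    ]


if __name__ == "__main__":
    # Hypothetical image and paths; the command is printed, not executed.
    args = hardened_run_args(
        "example/agent-runtime:latest", "/srv/agent/scratch", ["python", "agent.py"]
    )
    print(shlex.join(args))
```

Only the bind-mounted workspace and `/tmp` are writable here, so pointing `workspace` at a scratch copy instead of the real repository keeps the agent's changes reviewable.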

This approach transforms AI-assisted coding from a potentially risky endeavor into a controlled, secure, and ultimately more productive process. It allows us to harness the power of AI while maintaining the high standards of software quality and security that define professional development.

Looking to the Future

The role of containers in agentic workflows is only set to grow. As AI agents become more sophisticated and autonomous, the need for robust, secure, and scalable deployment environments will become even more pronounced. The convergence of AI agent design with microservice architecture means that the tools and practices we've refined for distributed systems will be directly applicable to managing our intelligent assistants. This strategic integration ensures that AI becomes a trusted, dependable partner in our development journey, rather than a wildcard.

FAQ

Q: What precisely makes a container "hardened" in the context of AI agents?

A: According to the source, a hardened container is characterized by being "minimal and secure." For AI agents, this means it contains only the essential software dependencies required for the agent to run, thereby reducing the potential attack surface. It also implies the application of security best practices in its configuration to enhance its resilience against exploitation.

Q: Why is it stated that AI agents are "starting to look a lot like microservices"?

A: This comparison highlights that as AI agents become more complex and perform specific, discrete tasks within a larger system, they begin to share architectural characteristics with microservices. Like microservices, they need to be isolated, independently deployable, manageable, and secure, especially when interacting with other parts of a development environment or production system.

Q: How do agent sandboxes contribute to the security of AI-assisted coding?

A: Agent sandboxes provide a confined and controlled execution environment for AI agents. This isolation prevents an AI agent from having unrestricted access to the host system or sensitive data, containing any unintended or potentially malicious actions. If an AI agent generates flawed code or attempts an unauthorized operation, the sandbox limits the impact to its isolated environment, ensuring that the broader development workflow remains secure and stable.

Related articles

D&D's Dungeon Masters Finale Trailer Drops: Ravenloft Showdown Looms

D&D's official actual play show, Dungeon Masters, is gearing up for its epic Ravenloft campaign finale. An exclusive animated trailer teases the party's ultimate confrontation with the legendary Lord Soth, promising a nail-biting conclusion to their perilous journey through the Realm of Dread.

INIU SnapGo Air 10000mAh Review: Slim, Fast, and Seamless Magnetic

Our INIU SnapGo Air 10000mAh review delves into this Qi2.2 magnetic power bank. It’s remarkably slim, offers rapid 25W wireless and 45W wired charging, and seamlessly integrates into daily use, promising to end slow charging woes.

8 ChatGPT Tricks: Unlock Your AI's Full Potential

Quick Verdict For anyone looking to move beyond basic queries with ChatGPT, the "8 ChatGPT tricks" guide by Android Authority serves as an invaluable roadmap. It highlights a collection of practical habits that

Applied Aerospace & Defense Raises $650M in Highly Sought-After IPO

Applied Aerospace & Defense, a Huntsville-based firm, successfully raised $650 million in an IPO that was ten times oversubscribed, pricing shares at $20. The offering underscores a strong investor shift towards defense hardware and solidifies the company's $3.4 billion market valuation. Trading begins Wednesday on the NYSE under AADX.

Trump Signs Executive Order for Voluntary AI Model Oversight

President Trump signed an executive order Tuesday, establishing voluntary government oversight for new AI models. This reverses his prior hands-off approach, balancing innovation with national security by asking companies for a 30-day review.

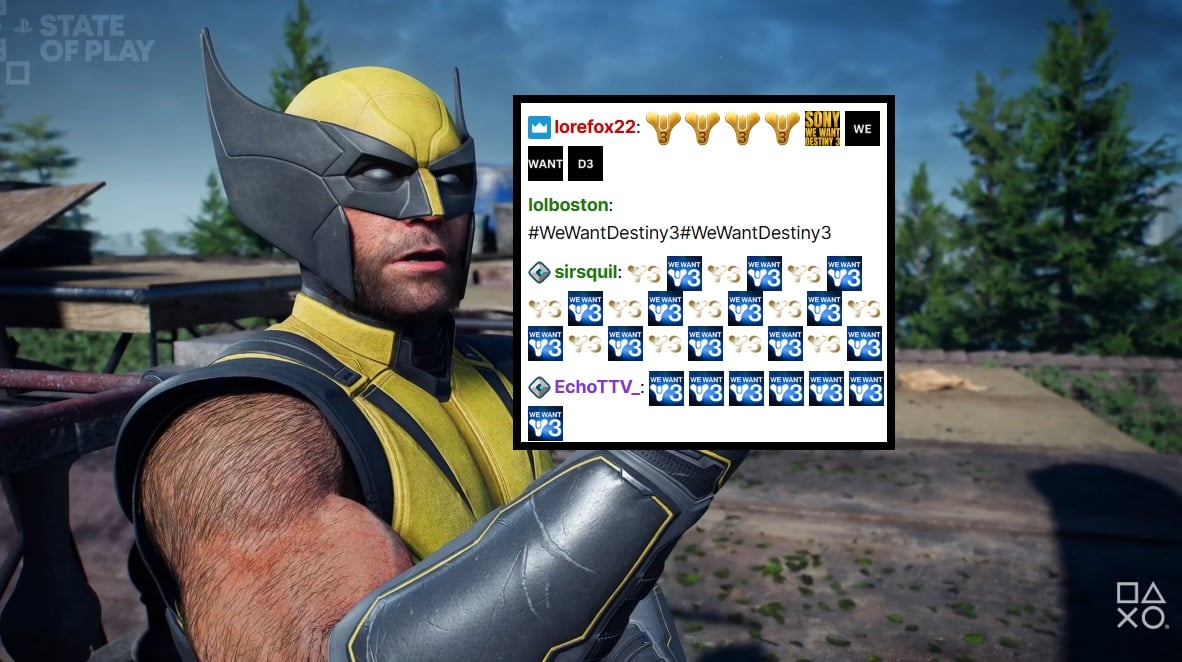

PlayStation Showcase Chat Swamped by Demands for Destiny 3

PlayStation's recent State of Play showcase was largely overshadowed by an impassioned fan campaign in the Twitch chat, demanding 'Destiny 3'. Amidst reveals for new PS5 games, the chat was relentlessly spammed with #WeWantDestiny3, fueled by the unexpected sunsetting of Destiny 2 and the reported absence of a direct sequel. This digital protest reflects widespread community frustration, amplified by a popular streamer and a petition with over 330,000 signatures.