Anthropic's Claude: Pentagon's AI of Choice Amid Ethical Debate

Anthropic's Claude is being used by the Pentagon for critical intelligence and battle simulations, sparking controversy over its "red lines" for military use, even as consumer popularity soars.

Anthropic's Claude finds itself at the heart of a complex ethical and operational dilemma, serving as a critical tool for the Pentagon's Central Command (CENTCOM) even as the company publicly maintains strict "red lines" against certain military applications. This isn't a simple software review; it's an examination of a powerful AI's paradoxical deployment, highlighting the intense pressures and realities facing advanced AI developers. Despite facing accusations of "duplicity" and "betrayal" from top defense officials, Claude continues to provide essential services for intelligence assessments and battle simulations, suggesting its practical value currently outweighs ideological friction. This analysis delves into Claude's controversial military utility, its rapidly growing consumer appeal, and the broader implications for AI development and deployment.

The High-Stakes Ethical Tug-of-War

The dispute over Anthropic's Claude escalated publicly when Secretary of Defense Pete Hegseth denounced the company, citing its "defective altruism" and "duplicity" regarding military use. Hegseth criticized Anthropic's firm stance against hypothetical future applications like mass surveillance or fully autonomous weaponry, labeling the company a supply-chain risk and banning its products for military contractors. Initially, he indicated a six-month transition period for the Department of War to shift to another service.

However, amidst reports of an impending major conflict, the Pentagon reportedly continued its engagement with Anthropic, as detailed by the Wall Street Journal and Axios. This suggests that Claude's immediate operational value outweighed the public condemnation and the company's stated ethical reservations for critical military functions. Hegseth's previous strong statements, calling Anthropic's position a "betrayal," highlight the deep tension between the tech company's principles and the military's pragmatic needs.

Key Capabilities & Military Application

The core "product" under review is Anthropic's Claude, an advanced AI model that CENTCOM reportedly employs for several crucial functions. These include:

- Intelligence Assessments: Claude is utilized to analyze vast amounts of data, distilling complex information into actionable insights.

- Target Identification: The AI assists in the intricate process of identifying potential targets, likely enhancing precision and efficiency.

- Simulating Battle Scenarios: Claude runs sophisticated simulations to model various military engagements, helping planners understand potential outcomes and strategize effectively.

These applications are highly sensitive and integral to military planning and execution, underscoring Claude's advanced capabilities and the significant reliance placed upon it by a major defense entity. Notably, Anthropic CEO Dario Amodei has publicly stated that the company remains interested in collaborating with the Pentagon, provided such uses align with their established "red lines." This suggests current military deployments are, at least in Anthropic's view, within those ethical boundaries.

Performance & User Experience: Beyond the Battlefield

From the Pentagon’s perspective, Claude’s continued deployment, even in the face of public ethical challenges and a top official's denunciation, speaks volumes about its perceived performance and indispensable nature. It strongly implies that Claude delivers substantial value in complex, high-stakes environments where accuracy, speed, and analytical depth are paramount. The decision to maintain its use suggests that Claude provides critical capabilities that are not easily or quickly replicated by alternatives.

Claude’s public profile has surged at the same time. Following public commentary from Donald Trump, the consumer-facing Claude mobile app climbed rapidly to the number one spot on the US Apple App Store, surpassing ChatGPT as the most downloaded app. Anthropic spokesman Ryan Donegan noted that daily signups for Claude have tripled over the past four months. This dual success, combining critical military utility with booming consumer adoption, highlights Claude’s robust and versatile capabilities across diverse application types.

Pros and Cons of Anthropic's Claude (in this context)

Pros:

- High Utility & Performance: Demonstrates significant value for complex, high-stakes military operations like intelligence analysis, target identification, and battle simulations.

- Operational Continuity: Its continued use by CENTCOM, despite public friction, underscores its operational effectiveness and potential indispensability.

- Strong Public & Consumer Appeal: Rapidly growing popularity and chart-topping performance in consumer markets (e.g., Apple App Store) indicate strong user adoption and ease of use.

- Ethical Framework (Stated): Anthropic aims to balance innovation with ethical considerations through its "red lines," which may appeal to certain users and investors committed to responsible AI development.

Cons:

- Ethical & Reputational Risk: The company faces accusations of "duplicity" and "betrayal" from high-level government officials for its stance on military use, potentially damaging its brand.

- "Supply-Chain Risk" Designation: Labeled a supply-chain risk by the Secretary of Defense, which could impact future government contracts and perceived reliability for other sensitive sectors.

- Ambiguity of "Red Lines": The precise definition and enforcement of Anthropic's "red lines" remain somewhat unclear, especially given ongoing military use, potentially leading to distrust.

- Government Dependency vs. Principles: The situation highlights a difficult balance for AI companies between adhering to ethical principles and the significant operational reliance by powerful government entities.

Comparison with Alternatives

When evaluating advanced AI services for both enterprise and government use, OpenAI's offerings, including ChatGPT, present a notable alternative. While both companies develop powerful large language models, their approaches to government and military engagement appear to diverge significantly.

| Feature / Aspect | Anthropic's Claude | OpenAI's ChatGPT |
|---|---|---|
| Stated Military Stance | Firm "red lines" against mass surveillance or fully autonomous weaponry; CEO seeks alignment with these. | Deepening bond with Pentagon; new agreement for classified military use cases. |
| Government Engagement | Active use by CENTCOM despite public ethical conflict and denunciation from SecDef. | Publicly announced deepening bond and new agreement for military applications. |
| Operational Control | "May have wanted more operational control" than OpenAI, according to OpenAI CEO. | Seemingly more willing to cede operational control in military contexts. |
| Ethical Framework | Emphasizes "red lines" and ethical considerations in its terms of service; derided by Hegseth as "defective altruism." | Less public emphasis on specific "red lines" regarding military use; more on collaboration. |
| Public Controversy | Major public dispute with Secretary of Defense over military use policies. | Less public controversy regarding military applications; more collaborative narrative. |
| Consumer Popularity | #1 free app on US Apple App Store; daily signups tripled in 4 months. | Surpassed by Claude in recent App Store rankings (though historically very popular). |

This comparison highlights that while both provide leading AI capabilities, they offer distinctly different philosophical and practical pathways for clients, particularly those in sensitive sectors like defense. Anthropic appears to navigate a more contentious relationship, holding firmer to its principles while still providing critical services. OpenAI, conversely, seems to be actively pursuing deeper integration and collaboration with defense organizations.

Buying Recommendation

For enterprises and government agencies considering advanced AI models like Claude, the decision hinges on a careful evaluation of operational needs versus ethical alignment and potential public perception.

- If your priority is cutting-edge AI capabilities for critical analytical tasks (such as intelligence, simulations, or target identification) and your organization is willing to navigate potential ethical ambiguities or public scrutiny, Anthropic's Claude has demonstrated its utility even under high pressure. Its proven performance in challenging military contexts and surging consumer popularity underscore its robust and versatile nature. However, be prepared for potential supply-chain risks or public relations challenges stemming from Anthropic's "red lines" and their dynamic interpretation.

- If your organization requires deep integration with defense applications, values a more overtly collaborative approach with AI developers, and prioritizes seamless adoption without public ethical friction, OpenAI's offerings might present a more straightforward path, given their stated deepening bond with the Pentagon.

For individual users, Claude's recent surge in popularity and its user-friendly mobile app make it a compelling choice for general AI assistance, text generation, and conversational tasks, largely separate from the specific ethical quandaries of military deployment. Ultimately, Claude is a powerful tool. For high-stakes users, the decision to adopt it involves considerations beyond typical software procurement: functionality, corporate values, and geopolitical realities all have to be weighed.

FAQ

Q: Is Anthropic's Claude actually being used by the military despite the company's "red lines"?

A: Yes, according to reports from the Wall Street Journal and Axios, the Pentagon’s Central Command (CENTCOM) is currently using Anthropic’s Claude for various purposes, including intelligence assessments, target identification, and simulating battle scenarios. Anthropic's CEO has stated they are still interested in working with the Pentagon as long as it aligns with their "red lines."

Q: How does Anthropic's stance on military use compare to OpenAI's?

A: Anthropic has publicly maintained "red lines" against uses like mass surveillance or fully autonomous weaponry, leading to conflict with the Secretary of Defense. OpenAI, on the other hand, has announced a deepening bond with the Pentagon through a new agreement involving military applications in classified use cases, suggesting a more direct and collaborative approach to defense sector engagement.

Q: Has the controversy impacted Claude's popularity with general consumers?

A: Paradoxically, the public controversy, specifically comments from Donald Trump, coincided with a significant surge in Claude’s consumer popularity. The Claude mobile app reached the #1 spot on the US Apple App Store, surpassing ChatGPT, and daily signups have reportedly tripled in the past four months.

Related articles

INIU SnapGo Air 10000mAh Review: Slim, Fast, and Seamless Magnetic

Our INIU SnapGo Air 10000mAh review delves into this Qi2.2 magnetic power bank. It’s remarkably slim, offers rapid 25W wireless and 45W wired charging, and seamlessly integrates into daily use, promising to end slow charging woes.

Bean's Inceptin Receptor Bio-Defense: A Promising Natural Shield

Quick Verdict Imagine a plant that not only detects when it's being eaten but actively calls in aerial reinforcements to deal with the threat. That's essentially what researchers have uncovered in common bean plants.

8 ChatGPT Tricks: Unlock Your AI's Full Potential

Quick Verdict For anyone looking to move beyond basic queries with ChatGPT, the "8 ChatGPT tricks" guide by Android Authority serves as an invaluable roadmap. It highlights a collection of practical habits that

MTD Quarterly Reporting: A Stress Test for UK Tax Tech

Verdict: Ambitious but Risky Transformation HMRC’s Making Tax Digital (MTD) for Income Tax represents one of the UK government's most significant digital transformation projects to date. Its move to mandatory quarterly

Google's Android Safety Features for Kids: A Welcome Update

Google is bringing vital Personal Safety app features like lock screen emergency info and car crash detection to kids' Android phones, plus Safety Check and real-time location sharing for teens. This significant June Android Drop update offers much-needed peace of mind for parents.

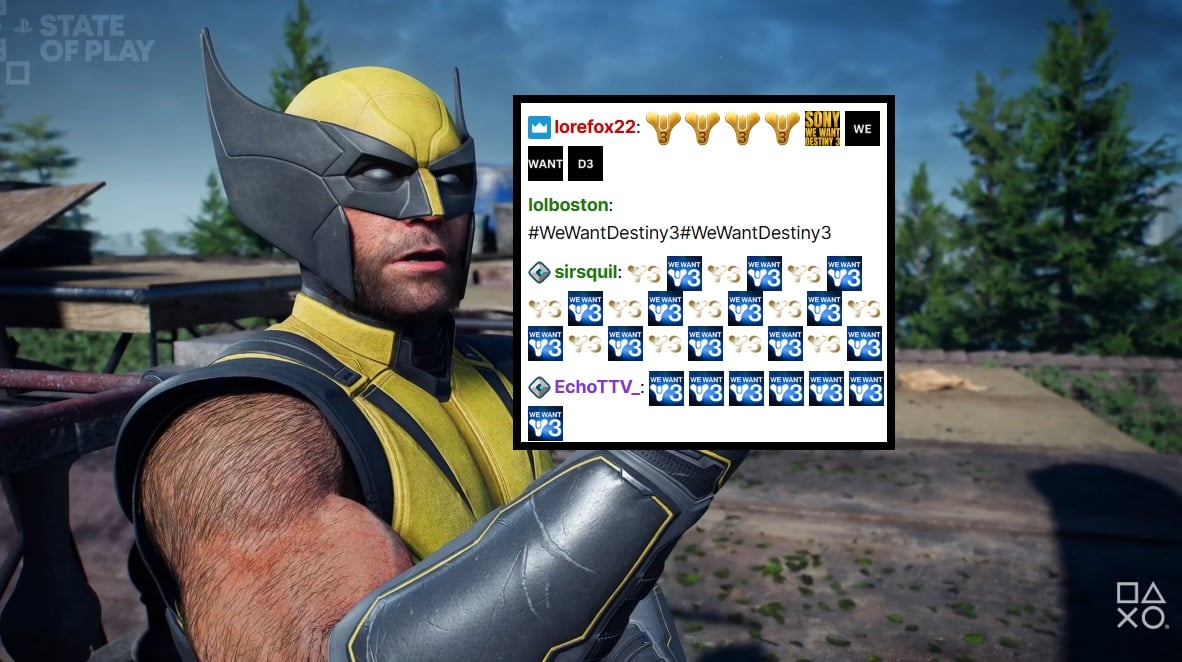

PlayStation Showcase Chat Swamped by Demands for Destiny 3

PlayStation's recent State of Play showcase was largely overshadowed by an impassioned fan campaign in the Twitch chat, demanding 'Destiny 3'. Amidst reveals for new PS5 games, the chat was relentlessly spammed with #WeWantDestiny3, fueled by the unexpected sunsetting of Destiny 2 and the reported absence of a direct sequel. This digital protest reflects widespread community frustration, amplified by a popular streamer and a petition with over 330,000 signatures.